Although experiments on human subjects have often proved ethically questionable, they have been carried out for more than two centuries and are now strictly controlled and regulated by law.

What Is Human Experimentation?

Human experimentation is a systematic, scientific investigation in which human beings serve as subjects in either medical (clinical) or non-medical research. Human subject research can be interventional or observational. This research method has led to many revolutionary advances since its first use at the end of the 18th century.

Observational vs Interventional Research

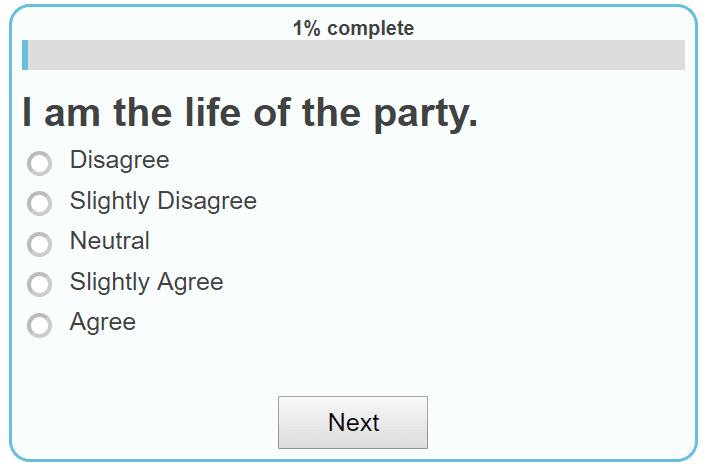

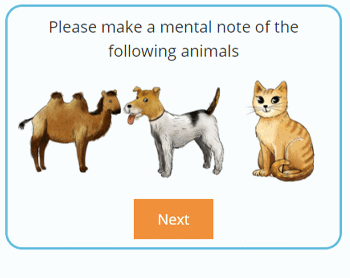

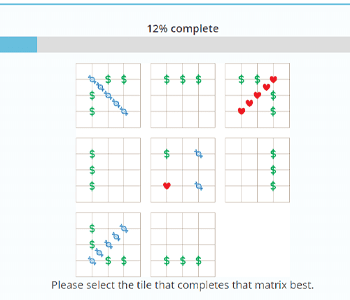

In observational research, investigators record their observations and analyze data without administering an intervention. Observational studies focus on aspects such as risk factors, disease progression, and disease treatments. Human subject research in the social sciences, for example, may involve surveys, questionnaires, interviews, and focus groups.

In interventional research, on the other hand, investigators manipulate the subjects or their environment in order to modify specific processes or results. The most common human intervention studies are clinical trials in which new drugs and vaccines are evaluated.

Examples of Human Experimentation

Human experiments were conducted extensively throughout the twentieth century. They attracted fame, controversy, and outrage alike. Let’s have a look at some of the best-known experiments performed on humans.

The smallpox experiment

The earliest known human experiment was carried out in 1796 by the English physician Edward Jenner, famous for developing the world’s first vaccine.

As a country doctor, Jenner was aware that milkmaids rarely caught smallpox. However, since they were in frequent contact with cows, they often contracted cowpox. Jenner speculated that cowpox produced immunity against smallpox. To test this theory, he injected fluid from a cowpox infection into the skin of his gardener’s son, eight-year-old James Phipps. When Jenner exposed the boy to smallpox several weeks later, he found that James had indeed become immune to the disease.

Following Jenner’s model, scientists in the 19th and 20th centuries developed new vaccines to fight many deadly diseases including polio, measles, and tetanus.

The Tuskegee experiment

In 1932, the U.S. Public Health Service, working with the Tuskegee Institute in Alabama, started studying the natural progression of syphilis, a disease that represented a major health problem at the time. Six hundred black men were enrolled in the project, which lasted for four decades; two-thirds of them had the disease.

The subjects of the study, officially known as the Tuskegee Study of Untreated Syphilis in the Negro Male, were not informed about the research. Instead, they were led to believe that they were receiving treatment for "bad blood"—a term that was used to describe several serious illnesses at the time—and promised free medical care and burial insurance as an incentive.

The men were given only placebos such as aspirin and mineral supplements. They were not treated for syphilis, even after penicillin became an effective cure for the disease in 1947. As a result, many participants died from complications of syphilis. The survivors were given treatment in 1972, after the nature of the study became publicly known.

Henrietta Lacks

Henrietta Lacks was a poor, uneducated African American tobacco farmer from Baltimore, Maryland, who had cervical cancer. In 1951, scientists at Baltimore’s Johns Hopkins Hospital collected cells from her tissue sample without her knowledge.

Henrietta’s cells, nicknamed HeLa cells, soon became invaluable in medical research. They were the first human cells to be successfully kept alive in the laboratory and cloned. They were essential in developing the polio vaccine and were sent into space on early space missions to see how they would be affected by zero gravity. HeLa cells were also used in gene mapping, in vitro fertilization, and countless other scientific endeavors.

The Milgram experiment

In 1961, Yale University psychologist Stanley Milgram carried out what has become one of the best-known studies of obedience in psychology. Milgram conducted a series of experiments to determine to what extent people are willing to obey instructions that involve harming others.

Participants in Milgram’s experiment were asked to act as “teachers” to “learners” seated in a separate room. They were instructed to administer an electric shock every time a learner answered a question incorrectly. With every new incorrect answer, the teachers were to increase the intensity of the shock. They did not know that the shocks were not real.

Milgram expected that no one would agree to administer strong electric shocks to the learners. To his surprise, 65% of participants obeyed the instructions until the very end of the experiment, going all the way up to 450 volts.

The Bystander Effect

When 28-year-old Kitty Genovese was killed outside her apartment in New York City in 1964, it was reported that none of her neighbors stepped in to assist or call the police. A few years later, social psychologists Bibb Latane and John Darley conducted a series of experiments to investigate this psychological phenomenon, known as the bystander effect.

The participants in Latane and Darley’s experiments were confronted with several types of emergencies, such as witnessing a seizure or seeing smoke enter through air vents. The psychologists found that the larger the number of witnesses or “bystanders”, the more time it took for people to respond to the emergency. The experiments demonstrated diffusion of responsibility: when surrounded by others, people expect someone else to take action. The lack of action was also a result of social influence, in which individuals observe the behavior of those around them before deciding how to act.

The Stanford Prison Experiment

Psychologist Philip Zimbardo was the author of the infamous 1971 social psychology experiment that investigated the psychological effects of perceived power. Zimbardo was interested in finding out whether the brutality reported among guards in American prisons was due to their personality traits or was mostly situational and had to do with the prison environment.

Zimbardo converted a basement of the Stanford University psychology department into a “prison” and recruited volunteers to take part in a study of the psychological effects of prison life. The volunteers assigned to be prisoners were arrested at their homes without warning and taken to the local police station, after which they were blindfolded and brought to the mock prison. Those assigned to be guards were instructed to do whatever was necessary to maintain law and order among the prisoners, short of resorting to physical violence.

The Stanford prison experiment revealed that people readily conformed to the stereotypical social roles they were expected to play. Placed in a position of authority, the volunteers playing prison guards began to act in ways they normally would not.

Growth hormone therapy

Human growth hormone (hGH) was first made available in the late 1950s to treat hormone-deficient children who would otherwise remain extremely short. Until the 1980s, only children lacking hGH were eligible to receive the treatment.

With the rise of genetic engineering, however, the hormone has become more readily available. At the National Institutes of Health (NIH), growth hormone has also been administered to perfectly healthy children who are short for their age, even though the procedure poses significant physical and psychological risks.

Ethics of Human Experimentation

There is no doubt that research involving human subjects is indispensable and has led to improvements in quality of life and to numerous medical breakthroughs. At the same time, as the above examples show, human experimentation has often been at the limit of what is ethically acceptable.

When Is Human Experimentation Criminal?

Jenner’s vaccine experiment was fortunately successful, but exposing a child to a deadly disease in the name of medical research is today considered unethical. The HeLa cells and Tuskegee experiments have been cited as examples of racial discrimination in science. The Stanford study has been heavily criticized as unethical because the volunteers playing prisoners, to whom the arrests came as a surprise, had not given fully informed consent. The NIH treatment of short children is often seen as a profitable pharmacologic solution to what is fundamentally a social problem.

In addition, in order to ensure sufficient participation in research, human experimentation was frequently carried out among the most vulnerable population groups, such as prisoners, poor people, minorities, mental patients, and children. Some of these wrongs were later acknowledged officially: in 1997, President Bill Clinton formally apologized to the survivors and communities affected by the Tuskegee experiment.

So how can researchers achieve a balance and justify exposing individual human subjects to risk for the sake of the advancement of science?

Ethical guidelines for human research

Ethical guidelines for regulating the use of human subjects in research were developed in response to numerous unethical experiments carried out throughout the 20th century. In the past sixty years, there has been a rapid emergence of various codes, regulations, and acts to govern ethical research in humans. In addition, several organizations were put in place to help monitor human experimentation.

The Nuremberg Code

The Nuremberg Code is a set of international rules and research ethics principles that were created to protect human test subjects. The code was established in 1947 as a result of the Nuremberg trials at the end of the Second World War. Originally, the code aimed to protect human subjects from any cruelty and exploitation similar to what the prisoners endured during the war.

The Nuremberg Code states that voluntary consent is essential in research and that participants have the right to end their participation at any moment. Furthermore, experiments can be carried out only by qualified professionals, who must terminate their study if the subjects are in danger.

The Nuremberg Code remains the most important document in the history of the ethics of medical research. It serves as a blueprint for today's principles that ensure the rights of subjects in human experimentation.

The Belmont Report

The Belmont Report was issued in 1978 by the National Commission for the Protection of Human Subjects of Biomedical and Behavioral Research. The report describes ethical conduct in research that involves human subjects. It includes three ethical principles that must be taken into account when using human subjects for research:

- Respect for persons: individuals should be treated as autonomous agents and people with diminished autonomy are entitled to protection

- Beneficence: maximizing benefits and minimizing possible harms in human experimentation, that is, acting in the best interest of the participant

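- Justice: the benefits and burdens of research should be distributed fairly, with fair treatment and unbiased selection of subjects.

The report applies these principles in practice through informed consent, assessment of risks and benefits, and fair selection of subjects. The Belmont Report provides the moral framework for understanding regulations on the use of humans in experimental methods in the United States.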

Food and Drug Administration regulations

The Food and Drug Administration (FDA) is one of the main authorities on human subject protection in research in the United States. The FDA regulations for the conduct of clinical trials have been in effect since the 1970s. These regulations require researchers to inform participants in an experiment that they could be used as control subjects or given a placebo and that, in certain cases, alternative therapies may exist. Researchers must also obtain the participants’ written consent.

Ethics committees

To protect the rights and well-being of research participants, while still allowing researchers to obtain meaningful results and insights into human behavior, all current biomedical and psychological research must go through a strict ethical review process.

Ethics committees assess and review trial designs. They approve, review, and monitor all research involving humans. Their task is to verify that subjects are not exposed to any unnecessary risks and that the research follows key ethical guidelines, including confidentiality, informed consent, and debriefing.

Ethics committees in the European Union are bodies responsible for oversight of medical or human research studies in EU member states.

Institutional review boards

In the United States, ethics committees are usually known as institutional review boards. Institutional review boards (IRBs), also called ethical review boards, are independent ethics committees that review Department of Health and Human Services research proposals involving human subjects. The aim of an institutional review board is to ensure that the proposals meet the ethical requirements of the regulations.

Any study involving human subjects that is conducted by a university or research organization has to be approved by an institutional review board, often even before investigators can apply for funding. This applies as much to research in anthropology, economics, political science, and sociology as it does to clinical or experimental research in medicine and psychology.