If you're on this page, you're probably researching B.F. Skinner and his work on operant conditioning! You might be surprised to see how much conditioning you go through each day! We are conditioned to behave in certain ways every day. Our brains naturally gravitate toward the things that bring us pleasure and back away from things that bring us pain. When we connect our behaviors to pleasure and pain, we become conditioned.

When people are subjected to reinforcements (pleasure) and punishments (pain), they undergo operant conditioning. This article will describe operant conditioning, how it works, and how different schedules of reinforcement can increase the rate at which subjects perform a certain behavior.

What is Operant Conditioning?

Operant conditioning is a system of learning that happens by changing external variables called 'punishments' and 'rewards.' Through time and repetition, learning happens when an association is created between a certain behavior and the consequence of that behavior (good or bad).

You might also hear this concept called “instrumental conditioning” or “Skinnerian conditioning.” This second term comes from B.F. Skinner, the behaviorist who developed the idea of operant conditioning through his work with pigeons.

He created what is now known as the “Skinner box,” a device that contained a lever, disc, or other mechanism. Something would occur when the lever was pulled or the disc was pressed: food would appear, lights would flash, the floor would become electrified, etc.

Skinner placed pigeons inside these boxes and recorded how their responses changed depending on the consequences that followed a certain task.

Based on how the pigeons' behavior changed once they connected their actions to the consequences, Skinner developed the idea of operant conditioning.

How Does Operant Conditioning Work?

We can unearth the definition of operant conditioning by breaking it down. Skinner defined an operant as any "active behavior that operates upon the environment to generate consequences." You get a big hug whenever you tell your mother she looks pretty. That compliment is an operant.

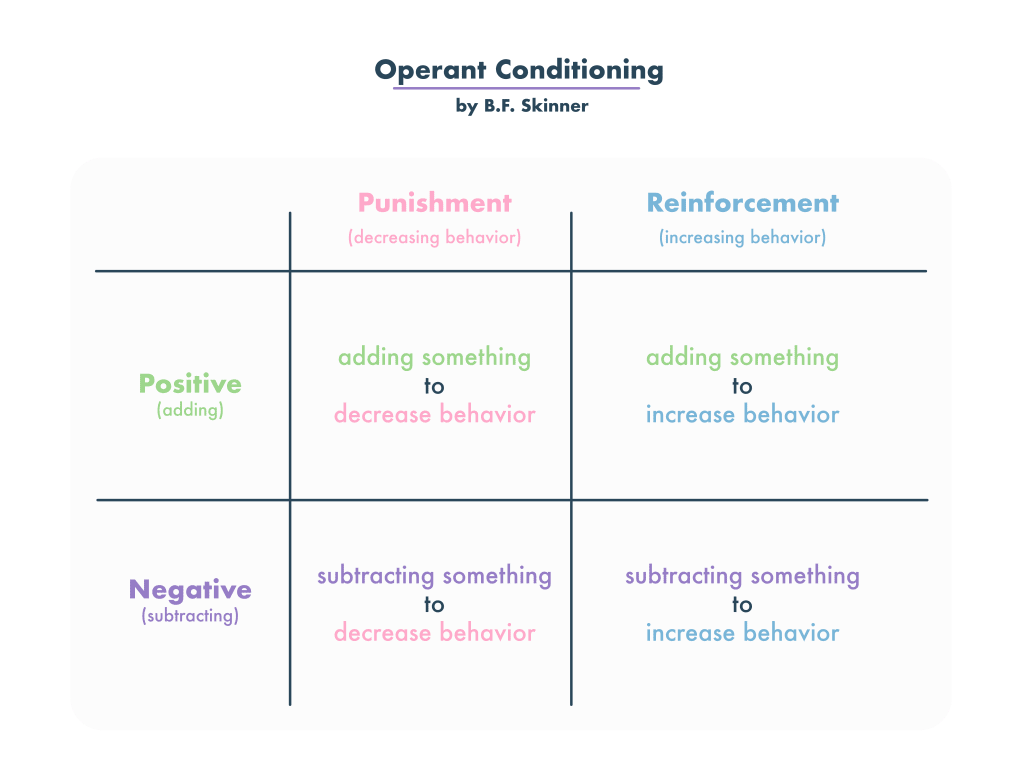

In operant conditioning, you can change two variables to achieve two goals.

The variables you can change are adding a stimulus or removing a stimulus.

The goals you can achieve are increasing a behavior or decreasing a behavior.

Depending on what goal you're trying to achieve and how you manipulate the variable, there are four methods of operant conditioning:

- Positive Reinforcement

- Negative Reinforcement

- Positive Punishment

- Negative Punishment

| Operant Conditioning | Increase Behavior | Decrease Behavior |
|---|---|---|
| Add Stimulus | Positive Reinforcement | Positive Punishment |
| Remove Stimulus | Negative Reinforcement | Negative Punishment |

Remembering operant conditioning types can be difficult, but here's a simple cheat sheet to help you.

Reinforcement is increasing a behavior.

Punishment is decreasing behavior.

The positive prefix means you're adding a stimulus.

The negative prefix means you're removing the stimulus.
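The cheat sheet above can also be written as a tiny lookup. This is only an illustrative Python sketch; the function name `classify` and its two boolean parameters are made up for this example and do not come from Skinner's work.

```python
def classify(adds_stimulus: bool, increases_behavior: bool) -> str:
    """Name the operant conditioning method for one combination.

    Both parameter names are hypothetical, chosen for this illustration.
    """
    # "Positive" means a stimulus is added; "negative" means one is removed.
    prefix = "Positive" if adds_stimulus else "Negative"
    # Reinforcement increases a behavior; punishment decreases it.
    kind = "Reinforcement" if increases_behavior else "Punishment"
    return f"{prefix} {kind}"


# Dessert after chores: a stimulus is added to increase a behavior.
print(classify(adds_stimulus=True, increases_behavior=True))
# Losing video game access: a stimulus is removed to decrease a behavior.
print(classify(adds_stimulus=False, increases_behavior=False))
```

The two questions are independent, which is why the four methods fit neatly into a two-by-two table.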

Reinforcement

Positive reinforcement sounds redundant - isn’t all reinforcement positive? In psychology, “positive” doesn’t exactly mean what you think it means. The term “positive reinforcement” simply refers to the idea that you have added a stimulus to increase a behavior. Dessert after finishing your chores is positive reinforcement.

Negative reinforcement is the removal of a stimulus to reinforce a behavior. It’s not always a negative experience. Removing debt from your account is considered negative reinforcement. A night without chores is also a negative reinforcement.

Under the umbrella of negative reinforcement are escape and active avoidance. These types of negative reinforcement condition your behavior through the threat or existence of “bad” stimuli.

Escape Learning

Escape learning is a crucial adaptive mechanism that enables a subject to end or minimize exposure to an aversive stimulus that is already present. By understanding the dynamics of escape learning, we can gain insights into how organisms, including humans, respond to threatening or harmful situations. Martin Seligman's experiments with dogs illustrated this principle: the dogs learned to change their behavior to escape a negative stimulus. This form of learning highlights how adverse conditions can motivate behaviors that alleviate discomfort or pain.

Active Avoidance Learning

Active avoidance learning is not just a theoretical concept; it has real-world applications in understanding our daily behaviors and decision-making processes. By recognizing the patterns in which we actively avoid negative stimuli, therapists and educators can design interventions to help individuals address anxieties or phobias. For instance, we actively prevent discomfort by putting on a coat to avoid the cold. Recognizing these patterns provides a foundational understanding of how humans often make proactive choices based on past experiences to avoid potential future discomforts. This proactive behavior adjustment plays a significant role in shaping our daily decisions and habits.

Escape and active avoidance learning are integral to understanding human behavior. They offer insights into how we navigate our environment, respond to threats, and proactively shape our actions to avoid potential negative outcomes.

Punishment

In operant conditioning, punishment is described as changing a stimulus to decrease the likelihood of a behavior. Like reinforcement, there are two types of punishment: positive and negative.

Positive punishment is not a positive experience - it discourages the subject from repeating their behaviors by adding a stimulus.

In The Big Bang Theory, Sheldon and the gang try to devise a plan to avoid getting off-topic. They decide to introduce a positive punishment to discourage that behavior.

The characters decide to put pieces of duct tape on their arms. When one of them gets off-topic, another person in the group rips the duct tape off that person’s arm as a form of operant conditioning. Adding that painful feeling makes their scheme a form of positive punishment.

Negative punishment takes something away from the subject to help discourage behavior. If your parents ever took away your access to video games or toys because you were behaving badly, they were using negative punishment to discourage you from bad behavior.

Measuring Response and Extinction Rates

Getting spanked for bad behavior once will not stop you from trying to get away with bad behavior. Feeling cold outside and warmer once you put on a coat will not teach you to put on a coat every time you go outside.

Researchers use two measurements to determine the effectiveness of different operant conditioning schedules: response rate and extinction rate.

The Response Rate is how often the subject performs the behavior to receive the reinforcement.

The Extinction Rate is quite different. If the subject doesn’t trust that they will get a reinforcement for their behavior or does not make the connection between the behavior and the consequence, they are likely to quit performing the behavior. The extinction rate is how quickly that behavior ends once reinforcements are no longer given.

Schedules of Reinforcement

How fast does operant conditioning happen? Can you manipulate response and extinction rates? The answer varies based on when and why you receive your reinforcement.

Skinner understood this. Throughout his research, he observed that the timing and frequency of reinforcement or punishment greatly impacted how quickly the subject learned to perform or refrain from a behavior. These factors also affect the response rate.

The different times and frequencies in which reinforcement is delivered can be identified by one of many schedules of reinforcement. Let’s look at those different schedules and how effective they are.

Continuous reinforcement

If you think about the simplest form of operant conditioning, you are probably thinking of continuous reinforcement. When the subject performs a behavior, they earn a reinforcement. This occurs every single time.

While the response rate is fairly high initially, extinction occurs quickly once continuous reinforcement stops. If you earn dessert every time you clean your room, you will clean your room when you want dessert. But if you clean your room and don’t earn dessert one day, you will lose trust in the reinforcement, and the behavior will likely stop.

The next four reinforcement schedules are called partial reinforcement. Reinforcements are not delivered every single time a behavior is performed. Instead, reinforcements are distributed based on the number of behaviors performed or the amount of time that has passed.

Fixed ratio reinforcement

“Ratio” refers to the number of responses. “Fixed” refers to a consistent number. Put them together, and you get a schedule of reinforcement with a consistent number of responses. Rewards programs often use fixed ratio reinforcement schedules to encourage customers to return. For every ten smoothies, you get one free.

Every time you spend $100, you get $20 off on your next purchase. The free smoothie and the discount are both reinforcements distributed after a consistent number of behaviors. It could take a subject two years or two weeks to reach that tenth smoothie - either way, the reinforcement is distributed after that tenth purchase.

The rate of response becomes more rapid as subjects experience fixed ratio reinforcement. Think about people in sales who work on commission. They know they will get a $1,000 paycheck for every five items they sell - you can bet that they are pushing hard to sell those five items and earn that reinforcement faster.
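To make the arithmetic of a fixed ratio schedule concrete, here is a minimal Python sketch. The function name `fixed_ratio_rewards` and its parameters are hypothetical; the numbers come from the smoothie and commission examples above.

```python
def fixed_ratio_rewards(responses: int, ratio: int) -> int:
    """Return how many reinforcements a fixed ratio schedule has paid out.

    One reinforcement is earned for every `ratio` completed responses.
    """
    if ratio <= 0:
        raise ValueError("ratio must be a positive integer")
    return responses // ratio


# Rewards card: one free smoothie for every ten purchased.
free_smoothies = fixed_ratio_rewards(responses=25, ratio=10)
print(free_smoothies)  # 2

# Commission: $1,000 for every five items sold.
commission = fixed_ratio_rewards(responses=12, ratio=5) * 1000
print(commission)  # 2000
```

Because the payout depends only on the count of responses, the only way to earn more is to respond more, which fits the faster response rate described above.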

Fixed interval reinforcement

Whereas “ratio” refers to the number of responses, “interval” refers to the time that passes between reinforcements. Subjects receive reinforcement after a certain amount of time has passed. You experience fixed interval reinforcement when you receive a paycheck on the 15th and 30th of every month, regardless of how often you perform a behavior.

The response rate is typically slower in situations with fixed interval reinforcement. Subjects know they will receive a reward no matter how often they perform the behavior. People in jobs with steady and consistent paychecks are often less likely to push hard and sell more products because they know they will get the same paycheck no matter how many items they sell. Other factors, like bonuses or verbal reprimands, may impact their motivation, but those extra factors don’t exist in pure fixed interval reinforcement.
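The contrast with a ratio schedule can be sketched in a few lines of Python. This is an illustration only: `fixed_interval_rewards` is a made-up name, and treating the pay period as exactly 15 days is a simplifying assumption about the paycheck example above.

```python
def fixed_interval_rewards(days_elapsed: int, interval_days: int, responses: int = 0) -> int:
    """Return how many reinforcements a fixed interval schedule has paid out.

    `responses` is accepted only to show that it has no effect on the result:
    in this simplified model the payout depends on elapsed time alone.
    """
    if interval_days <= 0:
        raise ValueError("interval_days must be a positive integer")
    return days_elapsed // interval_days


# Two employees over the same 60 days, paid every 15 days (assumed period).
print(fixed_interval_rewards(days_elapsed=60, interval_days=15, responses=3))    # 4
print(fixed_interval_rewards(days_elapsed=60, interval_days=15, responses=300))  # 4
```

Both calls return the same number of paychecks, which is the reason the response rate tends to be slower on this schedule.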

Variable ratio reinforcement

When discussing reinforcement schedules, “variable” means that the requirement for earning a reinforcement changes after each reinforcement is given.

Let’s go back to the example of the rewards card. On a variable ratio reinforcement schedule, the subject would receive their first free smoothie after buying ten smoothies. Once they get that first free smoothie, they only have to buy seven for another free smoothie. After that reinforcement is distributed, the subject has to buy 15 smoothies to get a free smoothie. The ratio of reinforcement is variable.

This type of schedule isn’t always used because it can be confusing - in many cases, the subject does not know how many smoothies they must purchase before getting their free one.

However, response rates are high for this type of schedule. The reinforcement is dependent on the subject’s behavior. They know they are one step closer to their reward by performing one more behavior. If they don’t get the reinforcement, they can perform one more behavior and again become one step closer to getting the reinforcement.

Think of slot machines. You never know how many times you must pull the lever before winning the jackpot. But you know you are one step closer to winning with every pull. At some point, if you just keep pulling, you will win the jackpot and receive a big reinforcement.
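The smoothie example above can be traced with a short Python sketch. The function name `variable_ratio_rewards` is hypothetical, and the list of requirements (10, then 7, then 15) is taken from that example; a real schedule would usually draw these numbers unpredictably.

```python
from typing import Sequence


def variable_ratio_rewards(responses: int, requirements: Sequence[int]) -> int:
    """Count reinforcements earned when the required number of responses
    changes after each reinforcement.

    `requirements` lists how many responses each successive reinforcement costs.
    """
    earned = 0
    remaining = responses
    for needed in requirements:
        if remaining < needed:
            break  # not enough responses yet for the next reinforcement
        remaining -= needed
        earned += 1
    return earned


smoothie_requirements = [10, 7, 15]
print(variable_ratio_rewards(9, smoothie_requirements))   # 0
print(variable_ratio_rewards(17, smoothie_requirements))  # 2
print(variable_ratio_rewards(32, smoothie_requirements))  # 3
```

The subject in the example does not see the list, so from their point of view the next purchase might always be the one that pays off.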

Variable interval reinforcement

The final reinforcement schedule identified by Skinner was that of variable interval reinforcement. By now, you can probably guess what this means. Variable interval reinforcement occurs when reinforcements are distributed after a certain amount of time has passed, but this amount varies after each reinforcement is distributed.

For example, let’s say you work at a retail store. At any given time, secret shoppers enter the store. If you behave correctly and sell the right items to the secret shopper, the higher-ups give you a bonus.

This could happen anytime as long as you are performing the behavior. This schedule keeps people on their toes, encouraging a high response rate and low extinction rate.
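Here is a minimal Python sketch of the secret shopper example, showing why staying on task pays off under a variable interval schedule. The function name `bonuses_earned` and the specific visit hours are invented for illustration.

```python
from typing import Iterable, Set


def bonuses_earned(visit_hours: Iterable[int], on_task_hours: Set[int]) -> int:
    """Count bonuses: one for each secret shopper visit that happens
    during an hour when the employee is performing the desired behavior."""
    return sum(1 for hour in visit_hours if hour in on_task_hours)


# Hypothetical visit times with uneven gaps between them (hours into the week).
visits = [2, 9, 11, 30]

always_on_task = set(range(40))     # on task for all 40 working hours
on_task_first_ten = set(range(10))  # on task only for hours 0 through 9

print(bonuses_earned(visits, always_on_task))     # 4
print(bonuses_earned(visits, on_task_first_ten))  # 2
```

Since the employee cannot predict the visit hours, the only reliable way to collect every bonus is to keep performing the behavior the whole time.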

FAQs About Operant Conditioning

Is Operant Conditioning Trial and Error?

Not exactly, although trial and error helped psychologists recognize operant conditioning. Through trial and error, it was discovered that reinforcements and rewards helped behaviors stick. These reinforcements (praise, treats, etc.) are the key to behaviors being performed and even repeated.

Is Operant Conditioning Behaviorism?

Behaviorism is an approach to psychology; think of operant conditioning as a theory under the umbrella of behaviorism. B.F. Skinner is considered one of the most important behaviorists in the history of psychology. For decades, theories like operant conditioning and classical conditioning have helped shape how people approach behavior.

Differences Between Operant Conditioning vs. Classical Conditioning

Classical conditioning ties existing behaviors (like salivating) to stimuli (like a bell). “Classical Connects.” Operant conditioning trains an animal or human to perform or refrain from certain behaviors. You don’t train a dog to salivate, but you can train a dog to sit by giving him treats when he sits.

Operant Conditioning vs. Instrumental Conditioning

Operant conditioning and instrumental conditioning refer to the same process. You are more likely to hear the term "operant conditioning" in psychology and "instrumental conditioning" in economics! However, they differ from another type of conditioning: classical conditioning.

Can Operant Conditioning Be Used in the Classroom?

Yes! Intentionally rewarding students for their behavior is a form of operant conditioning. If students receive praise every time they get an A, they are likelier to strive for an A on their tests and quizzes.

Everyday Examples of Operant Conditioning

You can probably think of ways you have used operant conditioning on yourself, your child, or your pets! Reddit users see operant conditioning in video games and pet training, ...

Post from iurichibaBR in r/FFBE (Final Fantasy Brave Exvius)

When you think about FFBE, what's the first thing that comes to mind? Most of you would probably answer CRYSTALS, PULLING, RAINBOWS, EVE! That's a clear example of Operant Conditioning. You wanna play the game every day and get that daily summons because you know you may get something awesome! And that's also why the Rainbow rates are low -- if you won them too frequently, it would lose its effect.

A cute example of operant conditioning from Narwahl_in_spaze in r/ABA (Applied Behavior Analysis)

Post from barbiegoingbad in r/Diabla

Now that Mary knows the basketball player is in the game for fame, she uses this to her advantage. Every time he does something desirable, she uses this as a reinforcement for him to continue and upgrade this behavior. After their first date went well, they went to an event together. She knows he wants adulation and to feel important, so she puts the spotlight on him and makes him look good in front of others whenever he goes out of his way to provide for her. This subconsciously makes him feel good, so he continues to provide her with what she wants and needs (in her case, gifts, money, and affection.)

Using Operant Conditioning On Yourself

We are used to forms of operant conditioning set up by the natural world or authority figures. But you can also use operant conditioning on yourself or with an accountability partner.

Here’s how you can do it yourself. You set up a fixed ratio reinforcement schedule: for every 10 note cards you write or memorize, you give yourself an hour of video games. You can set up a fixed interval reinforcement schedule: after every week of finals, you take a vacation.

Accountabilibuddies are best for setting up variable ratio and variable interval reinforcement schedules. That way, you don’t know when the reinforcement is coming. Tell your buddy to give you your video game controller back after you write a random number of note cards. Or, ask them to walk into your room at random intervals. If you’re studying, they hand you a beer. If you’re not, no reinforcement.